This video shows a cheap RC car hacked to be controlled by the Raspberry PI. It is completely autonomous: it uses a webcam to turn and point toward the light. The computation is done with the OpenCV libraries.


Raspberry PI as FM AUX input for your car radio

When I read about turning your Raspberry PI into an FM transmitter I was really excited. That’s real hacking!

I decided to try using this hack to provide an aux input for a car radio that doesn’t have one, and I succeeded (quite well).

I downloaded the FM transmitter C program from the link above and figured out a way to feed my USB audio card’s mic input into the program so that it could be broadcast over FM: wonderful.

The result was a short-range Raspberry PI powered FM transmitter: I was able to tune my radio to the right frequency (in this case 100.00 MHz) and listen to the music I was playing from my device.
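As a rough illustration of how a microphone could be fed into the transmitter (not necessarily what the scripts linked below do), one option is to record with `arecord` and pipe its output into the transmitter's standard input. The sketch assumes the transmitter binary is `./pifm` and accepts `-` (read from stdin) followed by the frequency and sample rate, and that the USB sound card is the ALSA device `plughw:1,0`. All of these are assumptions to check against your own setup.

```python
import subprocess


def build_pipeline(device="plughw:1,0", freq_mhz=100.0, rate=22050,
                   transmitter="./pifm"):
    """Return (recorder_argv, sender_argv) for a mic -> FM pipeline.

    device, rate and transmitter are placeholder assumptions, not values
    taken from the original scripts.
    """
    # No output file name is given, so arecord writes to standard output.
    recorder = ["arecord", "-D", device, "-f", "S16_LE", "-c", "1",
                "-r", str(rate), "-t", "wav"]
    # "-" is assumed to make the transmitter read audio from standard input.
    sender = ["sudo", transmitter, "-", str(freq_mhz), str(rate)]
    return recorder, sender


def start(recorder, sender):
    """Start both processes with the recorder's stdout piped into the sender."""
    rec = subprocess.Popen(recorder, stdout=subprocess.PIPE)
    snd = subprocess.Popen(sender, stdin=rec.stdout)
    rec.stdout.close()  # lets the recorder stop if the sender exits
    return rec, snd


recorder_cmd, sender_cmd = build_pipeline()
```

Calling `start(recorder_cmd, sender_cmd)` on the PI would launch the pipeline; nothing is started just by building the command lists.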

Since there has been some interest in how I made this, I am posting the little scripts I wrote for this project here, even though they are not very well written and may not be easy to understand or adapt to your needs. Here they are:

https://drive.google.com/folderview?id=0B8r7_UiGnBnUUW9zMXVpMjB1akU&usp=sharing

Raspberry PI powered Face Follower Webcam

Hi everybody, here’s my new Raspberry PI project: the face follower webcam!

When I received my first Raspberry, I understood it would be a lot of fun to play with real-world things, so I tried to make the PI react to its environment, and I played a lot with speech recognition, various kinds of sensors and so on. But then I suddenly realized that the really fun thing would be to make it see. I also understood that processing webcam input in real time would be a tough task for a device with such limited resources.

I have now discovered that it is indeed a tough task, but the Raspberry can handle it, as long as we keep things simple.

OpenCV Libraries

As soon as I started playing with webcams, I decided to look for existing libraries to parse the webcam input and extract some information from it. I soon came across the OpenCV libraries: beautiful libraries for processing images from the webcam, with a lot of features (which I haven’t fully explored yet), such as face detection.

I set out to find out whether it was possible to make them work on the PI, and yes, someone had already done that, with Python too, and it was as easy as a

sudo apt-get install python-opencv

After a few tries, I found out that the PI seemed slow at processing frames to detect faces, but only because it buffered the frames, and I soon had a workaround for that. Soon I was done: face detection on the Raspberry PI!

All you need to try face detection on your own is the python-opencv package and the pattern XML file haarcascade_frontalface_alt.xml, which I guess you can easily find on the Internet.
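The buffering workaround boils down to throwing away a few queued frames before using one. Here is a minimal sketch of the idea, with a plain callable standing in for the camera read; the function name `read_fresh_frame` and the fake camera are made up for illustration.

```python
def read_fresh_frame(read_frame, stale_frames=5):
    """Discard `stale_frames` possibly buffered frames, then return the next one."""
    for _ in range(stale_frames):
        read_frame()  # throw away a frame that may have been queued earlier
    return read_frame()


# Stand-in for a camera: each call hands out the next queued frame number.
queued = iter(range(100))


def fake_camera():
    return next(queued)


frame = read_fresh_frame(fake_camera)  # frames 0..4 are dropped, frame 5 is used
```

With a real capture object, `read_frame` would be something like a small wrapper around the camera's frame query call. The right number of frames to drop depends on the camera and driver.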

I started with a script found here and then I modified it for my purposes.

The Project

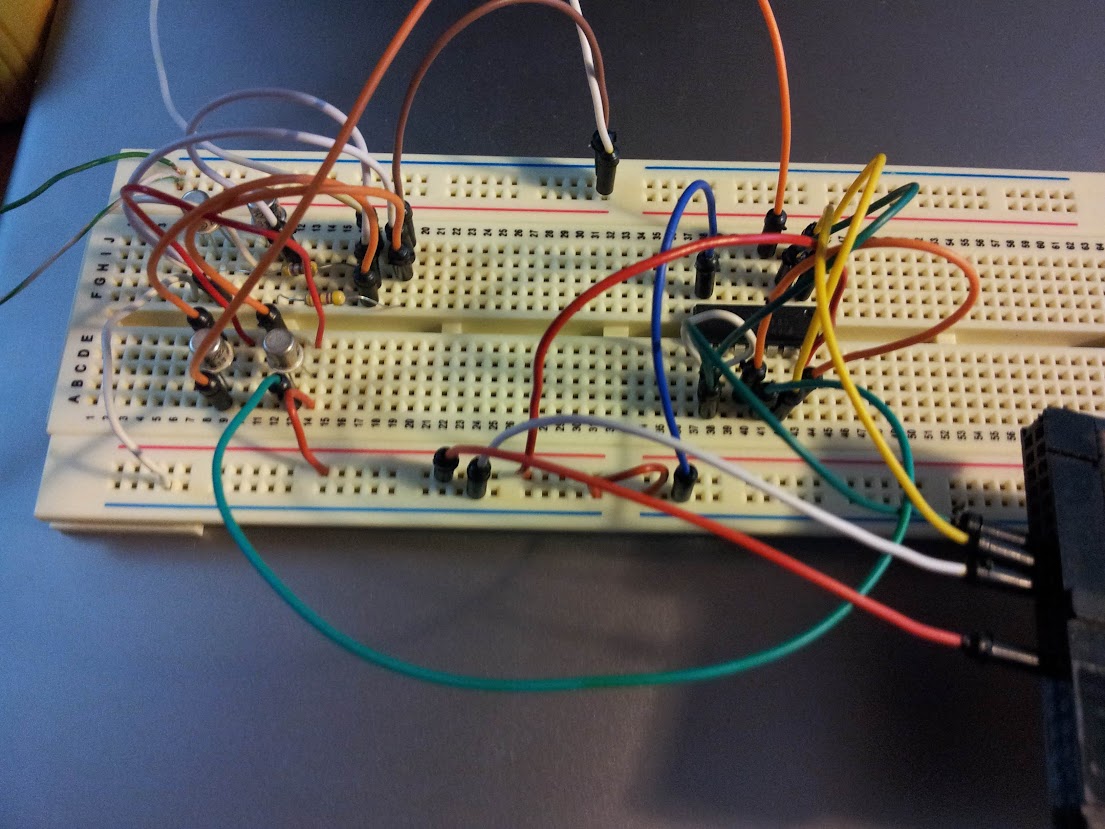

Eventually, I decided to use my PI to build a motor-driven webcam which could “follow” a face detected by OpenCV in the webcam stream. I disassembled a lot of things before finding a suitable motor, but then I managed to connect it to the Raspberry GPIO (I had to play a little with electronics here, because I didn’t have a servo motor. If you need some information about this part, I’ll be happy to provide it, but I’m not so good with electronics, so the only thing I can say is that it works). Here’s a picture of the circuit:

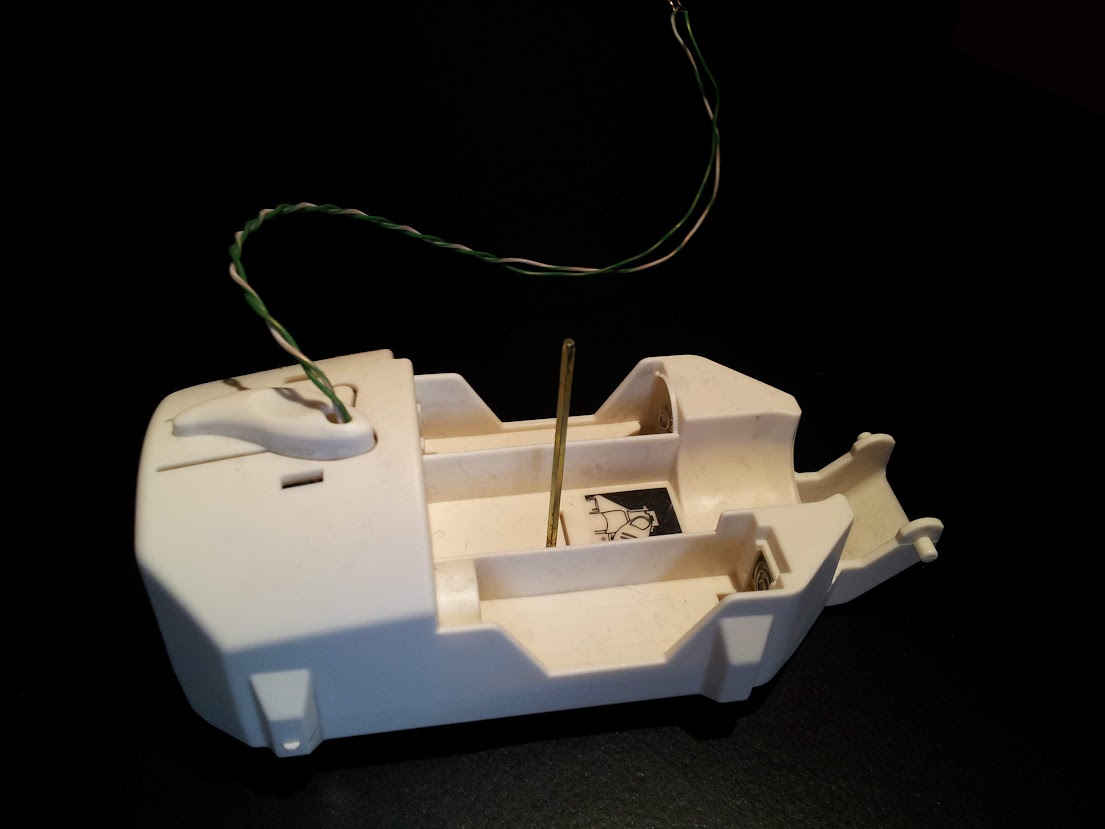

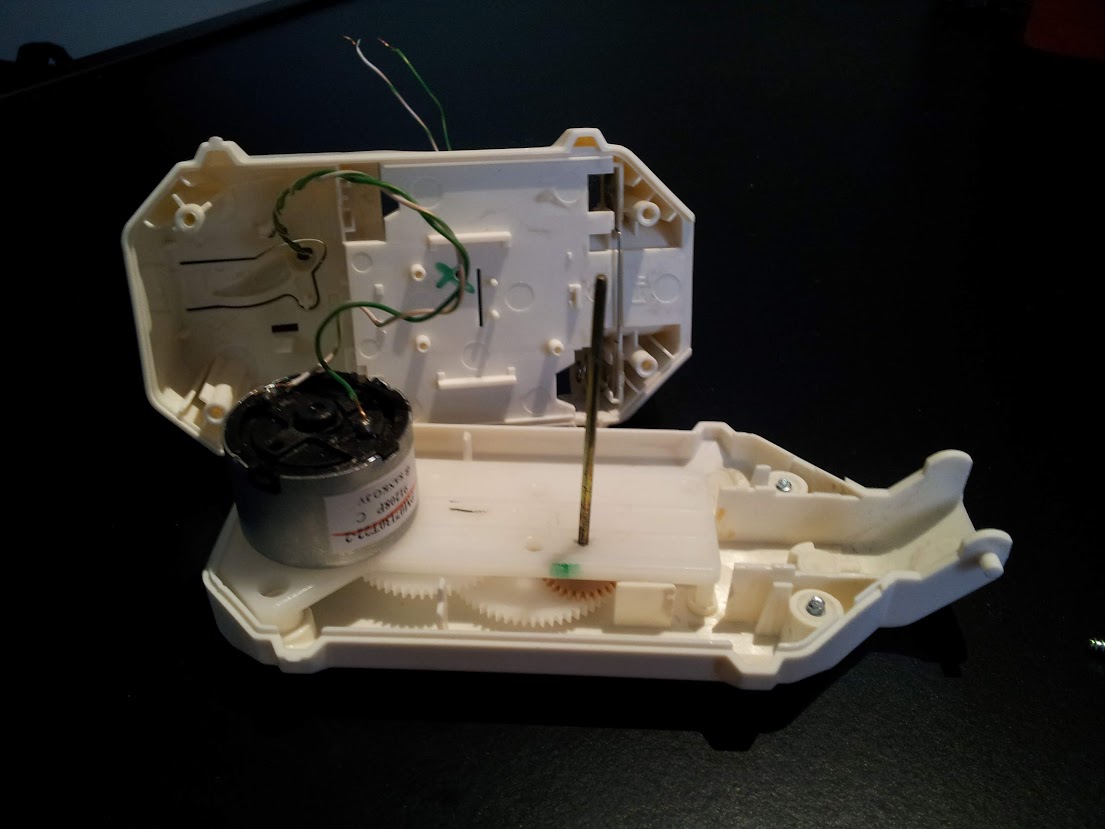

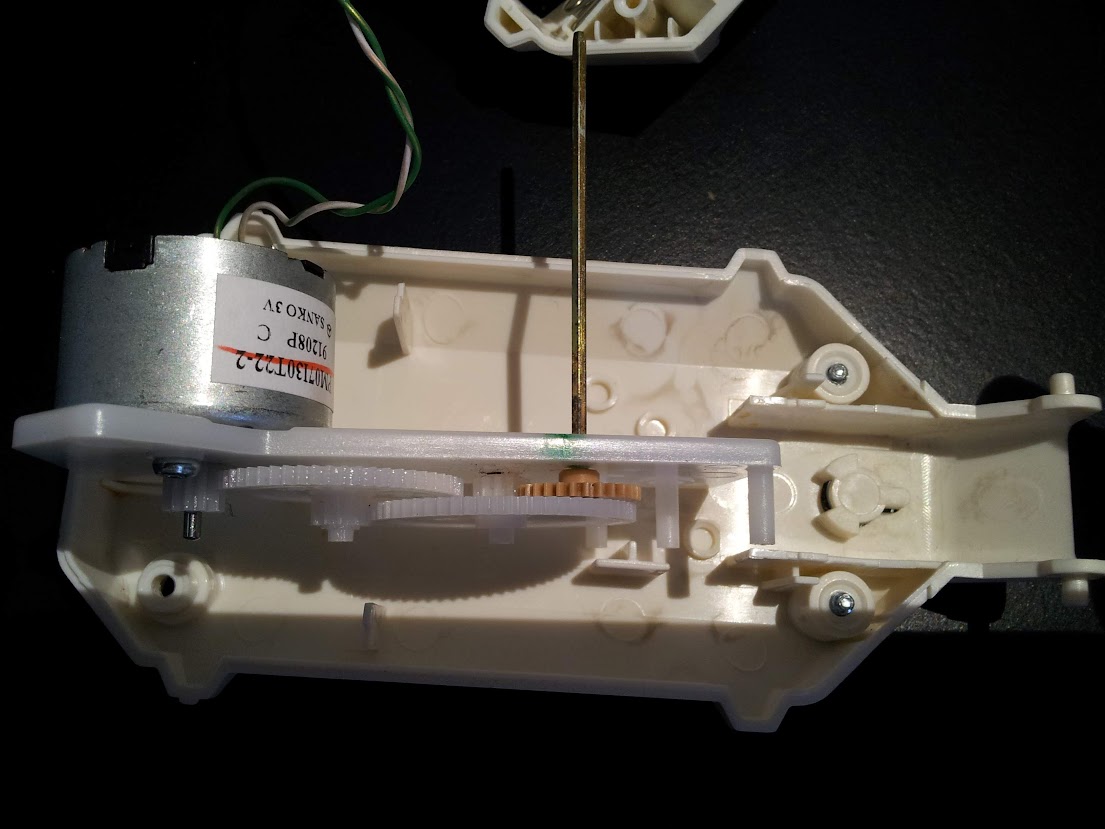

And here are some photos of the motor, to which I mounted an additional gear wheel.

Once the motor worked, I attached it to a webcam which I plugged into the PI, and then I combined the script linked above with some GPIO scripting to achieve the goal. Here is the result:

import RPi.GPIO as GPIO
import time,sys
import cv,os
from datetime import datetime
import Image

#GPIO pins i used
OUT0 = 11
OUT1 = 13

out0 = False #!enable line: when it's false, the motor turns, when it's true, it stops
out1 = False #!the direction the motor turns (clockwise or counter clockwise, it depends on the circuit you made)

def DetectFace(image, faceCascade):
    min_size = (20,20)
    image_scale = 2
    haar_scale = 1.1
    min_neighbors = 3
    haar_flags = 0

    # Allocate the temporary images
    grayscale = cv.CreateImage((image.width, image.height), 8, 1)
    smallImage = cv.CreateImage(
        (
            cv.Round(image.width / image_scale),
            cv.Round(image.height / image_scale)
        ), 8 ,1)

    # Convert color input image to grayscale
    cv.CvtColor(image, grayscale, cv.CV_BGR2GRAY)

    # Scale input image for faster processing
    cv.Resize(grayscale, smallImage, cv.CV_INTER_LINEAR)

    # Equalize the histogram
    cv.EqualizeHist(smallImage, smallImage)

    # Detect the faces
    faces = cv.HaarDetectObjects(
        smallImage, faceCascade, cv.CreateMemStorage(0),
        haar_scale, min_neighbors, haar_flags, min_size
    )

    # If faces are found
    if faces:
        #os.system("espeak -v it salve")
        for ((x, y, w, h), n) in faces:
            # return the centre of the first face, as fractions of the image width and height
            return (image_scale*(x+w/2.0)/image.width, image_scale*(y+h/2.0)/image.height)
            # (the lines below are never reached because of the return above)
            # the input to cv.HaarDetectObjects was resized, so scale the
            # bounding box of each face and convert it to two CvPoints
            pt1 = (int(x * image_scale), int(y * image_scale))
            pt2 = (int((x + w) * image_scale), int((y + h) * image_scale))
            cv.Rectangle(image, pt1, pt2, cv.RGB(255, 0, 0), 5, 8, 0)

    return False

def now():
    return str(datetime.now())

def init():
    GPIO.setmode(GPIO.BOARD)
    GPIO.setup(OUT0, GPIO.OUT)
    GPIO.setup(OUT1, GPIO.OUT)
    stop()

def stop(): #stops the motor and return when it's stopped
    global out0
    if out0 == False: #sleep only if it was moving
        out0 = True
        GPIO.output(OUT0,out0)
        time.sleep(1)
    else:
        out0 = True
        GPIO.output(OUT0,out0)

def go(side, t): #turns the motor towards side, for t seconds
    print "Turning: side: " +str(side) + " time: " +str(t)
    global out0
    global out1
    out1 = side
    GPIO.output(OUT1,out1)
    out0 = False
    GPIO.output(OUT0,out0)
    time.sleep(t)
    stop()

#getting camera number arg
cam = 0
if len(sys.argv) == 2:
    cam = sys.argv[1]

#init motor
init()

capture = cv.CaptureFromCAM(int(cam))

#i had to take the resolution down to 480x320 because the pi gave me errors with the default (higher) resolution of the webcam
cv.SetCaptureProperty(capture, cv.CV_CAP_PROP_FRAME_WIDTH, 480)
cv.SetCaptureProperty(capture, cv.CV_CAP_PROP_FRAME_HEIGHT, 320)

#capture = cv.CaptureFromFile("test.avi")

#faceCascade = cv.Load("haarcascades/haarcascade_frontalface_default.xml")
#faceCascade = cv.Load("haarcascades/haarcascade_frontalface_alt2.xml")
faceCascade = cv.Load("/usr/share/opencv/haarcascades/haarcascade_frontalface_alt.xml")
#faceCascade = cv.Load("haarcascades/haarcascade_frontalface_alt_tree.xml")

while (cv.WaitKey(500)==-1):
    print now() + ": Capturing image.."
    for i in range(5):
        cv.QueryFrame(capture) #this is the workaround to avoid frame buffering
    img = cv.QueryFrame(capture)
    hasFaces = DetectFace(img, faceCascade)
    if hasFaces == False:
        print now() + ": Face not detected."
    else:
        print now() + ": " + str(hasFaces)
        #turn for up to 0.3 seconds, proportionally to the distance of the face from the centre
        val = abs(0.5-hasFaces[0])/0.5 * 0.3
        #print "moving for " + str(val) + " secs"
        go(hasFaces[0] < 0.5, val)
    #cv.ShowImage("face detection test", image)

Of course I had to play with the timing to make the webcam turn well, and the timings, like everything closely tied to the motor, depend on your specific motor and/or circuit.

Here’s the video (in Italian):
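To make the timing rule in the script easier to tune, the calculation can be pulled out into a small function. This is only a sketch: the name `turn_command` and the `max_seconds` parameter are introduced here for illustration, while the formula and the 0.3 second ceiling are the ones used in the loop above.

```python
def turn_command(face_x, max_seconds=0.3):
    """Map a horizontal face position (0.0 = left edge, 1.0 = right edge)
    to (side, seconds): which way to turn and for how long."""
    offset = abs(0.5 - face_x) / 0.5  # 0.0 at the centre, 1.0 at either edge
    side = face_x < 0.5               # same boolean the script passes to go()
    return side, offset * max_seconds


centred = turn_command(0.5)     # no turn needed
far_left = turn_command(0.0)    # longest turn
slightly_right = turn_command(0.75)
```

With a different motor or gearing, `max_seconds` is the single value to adjust. Which physical direction `side` corresponds to depends on the circuit.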

Raspberry PI controlled webcam turning system

I’m writing to present my new Raspberry PI powered project: a simple motor controlled directly from the Raspberry’s GPIO. I’m very excited at the thought of the Raspberry actually moving things.

My initial idea was to build a webcam which could rotate to follow a face or an object, but I believe the Raspberry PI’s CPU is too limited for this purpose. Anyway, I decided to try to make a webcam turning system, which is still pretty cool.

To do that I disassembled an old CD player and used the motor system that moves the laser, plus some other salvaged pieces, to turn the webcam.

The following video (in Italian) shows how it worked (not really well but hey, it does turn the webcam!).

The interesting thing about this project is that this kind of motor (from an old CD player) is capable of turning a webcam (whose weight I had reduced as much as I could) while being powered only by the GPIO port. Not the 3V or 5V pins, which can supply a fair amount of current, but the GPIO pins themselves.

At this stage it is of course completely unusable, but like almost every project I’ve made so far, it is just meant to be a proof of concept.

Raspberry PI powered Door Alarm

This is my latest Raspberry PI project. It’s a simple alarm driven by a home-made door sensor, which plays an alarm sound and sends me a message via Twitter.

The video is in Italian.

Here’s the Python code, which uses the tweepy library:

#!/usr/bin/python

# Import modules for CGI handling
import cgi, cgitb, time
import RPi.GPIO as GPIO
import os
import tweepy
import traceback

#fill these variables with your twitter app data
oauth_token = **
oauth_token_secret = **
consumer_key = **
consumer_secret = **

auth = tweepy.OAuthHandler(consumer_key, consumer_secret)
auth.set_access_token(oauth_token, oauth_token_secret)
api = tweepy.API(auth)

def send_tweet( api, what ):
    try:
        print "Tweeting '"+what+"'.."
        now = int(time.time())
        api.update_status('@danielenick89 '+ str(now) +': '+ what)
        print "Tweet id "+str(now)+ " sent"
    except:
        print "Error while sending tweet"
        traceback.print_exc()

GPIO.setmode(GPIO.BOARD)
GPIO.setup(11, GPIO.IN)
state = GPIO.input(11)

#print "Content-type: text/html\n\n";
while not GPIO.input(11):
    time.sleep(0.3)

#print "Activated.."
while GPIO.input(11):
    time.sleep(0.3)

send_tweet(api, "RasPI: Unauthorized door opening detected.")
os.system("mplayer alarm2/alarm.mp3");

In order to use this code you have to create an application on Twitter, and then fill in the variables (oauth_token, oauth_token_secret, consumer_key, consumer_secret) with the corresponding values, which you can find on the site after creating the app.
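The two polling loops in the script form a small two-phase wait: first until the sensor input reads true, then until it reads false again, at which point the alarm fires. The sketch below isolates that logic so it can be tried without any hardware. The function name `wait_for_door_opening` and the injected `read_sensor` and `sleep` callables are illustrative assumptions, and which electrical level means "door closed" depends on how the sensor is wired.

```python
import time


def wait_for_door_opening(read_sensor, poll_seconds=0.3, sleep=time.sleep):
    """Block until the sensor reads true and then false again."""
    # Phase 1: wait until the input reads true (presumably: alarm armed).
    while not read_sensor():
        sleep(poll_seconds)
    # Phase 2: wait until it reads false again (presumably: door opened).
    while read_sensor():
        sleep(poll_seconds)


# Simulated run: a scripted list of readings stands in for GPIO.input(11),
# and the sleeps are recorded instead of actually waiting.
readings = iter([False, False, True, True, False])
naps = []
wait_for_door_opening(lambda: next(readings), sleep=naps.append)
```

On the PI, `read_sensor` would be `lambda: GPIO.input(11)`, and the tweet and alarm sound would follow the call.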

Raspberry PI controlled Light Clapper

This is one of my first Raspberry PI projects. It consists of a Raspberry connected to a microphone which detects a hand clap and then controls, via GPIO, a relay that powers the lamp.

The code used for detecting the clap (which is not perfect, because it analyzes only the volume of the microphone) is this:

[Actually, this is not the same code used in the video above: it has been improved, and the delay between the two claps is shorter in this script.]

The code was written only as a proof of concept, by joining together various pieces of code found on the Internet, so don’t expect it to be bug-free or well written. It is not.

You should use it to understand how it works, and then write something better or adapt it to your own project.

#!/usr/bin/python

import urllib
import urllib2
import os, sys
import ast
import json
import getpass, poplib
from email import parser
import RPi.GPIO as GPIO

file = 'test.flac'

import alsaaudio, time, audioop

class Queue:
    """A sample implementation of a First-In-First-Out
       data structure."""
    def __init__(self):
        self.size = 35
        self.in_stack = []
        self.out_stack = []
        self.ordered = []
        self.debug = False

    def push(self, obj):
        self.in_stack.append(obj)

    def pop(self):
        if not self.out_stack:
            while self.in_stack:
                self.out_stack.append(self.in_stack.pop())
        return self.out_stack.pop()

    def clear(self):
        self.in_stack = []
        self.out_stack = []

    def makeOrdered(self):
        self.ordered = []
        for i in range(self.size):
            #print i
            item = self.pop()
            self.ordered.append(item)
            self.push(item)

        if self.debug:
            i = 0
            for k in self.ordered:
                if i == 0: print "-- v1 --"
                if i == 5: print "-- v2 --"
                if i == 15: print "-- v3 --"
                if i == 20: print "-- v4 --"
                if i == 25: print "-- v5 --"
                for h in range(int(k/3)):
                    sys.stdout.write('#')
                print ""
                i=i+1

    def totalAvg(self):
        tot = 0
        for el in self.in_stack:
            tot += el
        for el in self.out_stack:
            tot += el
        return float(tot) / (len(self.in_stack) + len(self.out_stack))

    def firstAvg(self):
        tot = 0
        for i in range(5):
            tot += self.ordered[i]
        return tot/5.0

    def secondAvg(self):
        tot = 0
        for i in range(5,15):
            tot += self.ordered[i]
        return tot/10.0

    def thirdAvg(self):
        tot = 0
        for i in range(15,20):
            tot += self.ordered[i]
        return tot/5.0

    def fourthAvg(self):
        tot = 0
        for i in range(20,30):
            tot += self.ordered[i]
        return tot/10.0

    def fifthAvg(self):
        tot = 0
        for i in range(30,35):
            tot += self.ordered[i]
        return tot/5.0

def wait_for_sound():
    GPIO.setmode(GPIO.BOARD)
    GPIO.setup(11, GPIO.OUT)

    # Open the device in nonblocking capture mode. The last argument could
    # just as well have been zero for blocking mode. Then we could have
    # left out the sleep call in the bottom of the loop
    card = 'sysdefault:CARD=Microphone'
    inp = alsaaudio.PCM(alsaaudio.PCM_CAPTURE,alsaaudio.PCM_NONBLOCK, card)

    # Set attributes: Mono, 16000 Hz, 16 bit little endian samples
    inp.setchannels(1)
    inp.setrate(16000)
    inp.setformat(alsaaudio.PCM_FORMAT_S16_LE)

    # The period size controls the internal number of frames per period.
    # The significance of this parameter is documented in the ALSA api.
    # For our purposes, it is sufficient to know that reads from the device
    # will return this many frames. Each frame being 2 bytes long.
    # This means that the reads below will return either 320 bytes of data
    # or 0 bytes of data. The latter is possible because we are in nonblocking
    # mode.
    inp.setperiodsize(160)

    last = 0
    max = 0
    clapped = False
    out = False

    # the loop below stops when something else writes "1" into this file
    fout = open("/var/www/cgi-bin/clapper/killme", "w")
    fout.write('0\n');
    fout.close()

    queue = Queue();
    avgQueue = Queue();
    n = 0;
    n2=0;

    while True:
        fin = open("/var/www/cgi-bin/clapper/killme", "r")
        if fin.readline() == "1\n":
            break;
        fin.close()

        # Read data from device
        l,data = inp.read()
        if l:
            err = False
            volume = -1
            try:
                volume = audioop.max(data, 2)
            except:
                print "err";
                err = True
            if err: continue

            queue.push(volume)
            avgQueue.push(volume)
            n = n + 1
            n2 = n2 + 1
            if n2 > 500:
                avgQueue.pop()

            if n > queue.size:
                avg = avgQueue.totalAvg()
                print "avg last fragments: " + str(avg)

                low_limit = avg + 10
                high_limit = avg + 30

                queue.pop();
                queue.makeOrdered();
                v1 = queue.firstAvg();
                v2 = queue.secondAvg();
                v3 = queue.thirdAvg();
                v4 = queue.fourthAvg();
                v5 = queue.fifthAvg();

                if False:
                    print "v1: "+str(v1)+"\n"
                    print "v2: "+str(v2)+"\n"
                    print "v3: "+str(v3)+"\n"
                    print "v4: "+str(v4)+"\n"
                    print "v5: "+str(v5)+"\n"

                #if v1 < low_limit: print str(n)+": v1 ok"
                #if v2 > high_limit: print str(n)+": v2 ok"
                #if v3 < low_limit: print str(n)+": v3 ok"
                #if v4 > high_limit: print str(n)+": v4 ok"
                #if v5 < low_limit: print str(n)+": v5 ok"

                # quiet, loud, quiet, loud, quiet: a double clap
                if v1 < low_limit and v2 > high_limit and v3 < low_limit and v4 > high_limit and v5 < low_limit:
                    print str(time.time())+": sgaMED"
                    out = not out
                    GPIO.output(11, out)
                    queue.clear()
                    n = 0

        time.sleep(.01)

wait_for_sound()

The code was found on the Internet and then adapted for my purposes. It uses a standard USB microphone, and should work with most Linux-compatible USB mics (I think even webcam-integrated mics).
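The core of the detection is a pattern test over the 35 most recent volume readings: quiet, loud, quiet, loud, quiet, each compared against thresholds derived from the running background average. A standalone sketch of just that test follows. The function names and the sample numbers are made up for illustration, while the window boundaries and the +10 / +30 margins are the ones used in the script.

```python
def mean(values):
    return sum(values) / float(len(values))


def is_double_clap(samples, background, low_margin=10, high_margin=30):
    """samples: the 35 most recent volume readings, oldest first."""
    if len(samples) != 35:
        raise ValueError("expected exactly 35 samples")
    low = background + low_margin    # "quiet" windows must average below this
    high = background + high_margin  # "loud" windows must average above this
    v1 = mean(samples[0:5])     # quiet before the first clap
    v2 = mean(samples[5:15])    # first clap
    v3 = mean(samples[15:20])   # pause between the claps
    v4 = mean(samples[20:30])   # second clap
    v5 = mean(samples[30:35])   # quiet after the second clap
    return v1 < low and v2 > high and v3 < low and v4 > high and v5 < low


# Made-up readings: 5 is "silence", 90 is "clap".
quiet, loud = [5] * 5, [90] * 10
double_clap = quiet + loud + quiet + loud + quiet
single_clap = quiet + loud + [5] * 20

detected = is_double_clap(double_clap, background=20)
ignored = is_double_clap(single_clap, background=20)
```

Because only averaged volume is compared, any two loud bursts with the right spacing will match, which is the limitation mentioned above.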

Infrared Webcam / Lamp project

I just modified a normal webcam to work with infrared light, following these instructions: http://www.hoagieshouse.com/IR/

Then I created an IR lamp using an incandescent light bulb (which emits almost all frequencies of light), which I filtered with some old photographic negatives, which make quite a good IR-pass filter.

The video is in Italian.