Hi everybody, here’s my new Raspberry Pi project: the face follower webcam!

When I received my first Raspberry Pi, I realized it would be a lot of fun to play with real-world things, so I tried to make the Pi react to its environment: I played a lot with speech recognition, various kinds of sensors and so on. Then I suddenly realized that the really fun thing would be to make it see. I also expected that processing webcam input in real time would be a tough task for a device with such limited resources.

I have now found out that it is indeed a tough task, but the Raspberry Pi can handle it, as long as we keep things simple.

OpenCV Libraries

As soon as I started playing with webcams, I looked for existing libraries to parse the webcam input and extract some information from it. I soon came across OpenCV, a beautiful set of libraries for processing images, with a lot of features (which I haven’t fully explored yet), including face detection.

I tried to find out whether they could run on the Pi, and yes, someone had already done that, with Python too, and it was as easy as:

sudo apt-get install python-opencv

After a few tries, I found that the Pi was slow at processing the frames to detect faces, but only because it buffered the frames. I soon had a workaround for that, and then I was done: face detection on the Raspberry Pi!
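The buffering workaround boils down to reading and throwing away a few frames before grabbing the one to analyse. Here is a minimal sketch of the idea in plain Python. The names `grab_fresh_frame` and `read_frame` are made up for this illustration (in the full script further down, the read is `cv.QueryFrame(capture)`), and the count of 5 is simply the value used in that script, not a rule.

```python
def grab_fresh_frame(read_frame, stale_frames=5):
    """Discard `stale_frames` reads, then return the next frame.

    read_frame is any callable that returns one frame per call
    (hypothetical stand-in for the camera read used in the script).
    """
    for _ in range(stale_frames):
        read_frame()  # throw away a frame that may have been sitting in the buffer
    return read_frame()


# Stand-in for a camera: returns 0, 1, 2, ... on successive calls.
counter = iter(range(100))
frame = grab_fresh_frame(lambda: next(counter))
```

In this toy run the first five "frames" (0 to 4) are dropped and the sixth one is returned. The price is a few extra reads per detection, which was acceptable for this project because the loop only runs about twice a second anyway.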

All you need to try face detection yourself is the python-opencv package and the pattern XML file haarcascade_frontalface_alt.xml, which I guess you can easily find on the Internet (the script below loads it from /usr/share/opencv/haarcascades/).

I started with a script found here and then I modified it for my purposes.

The Project

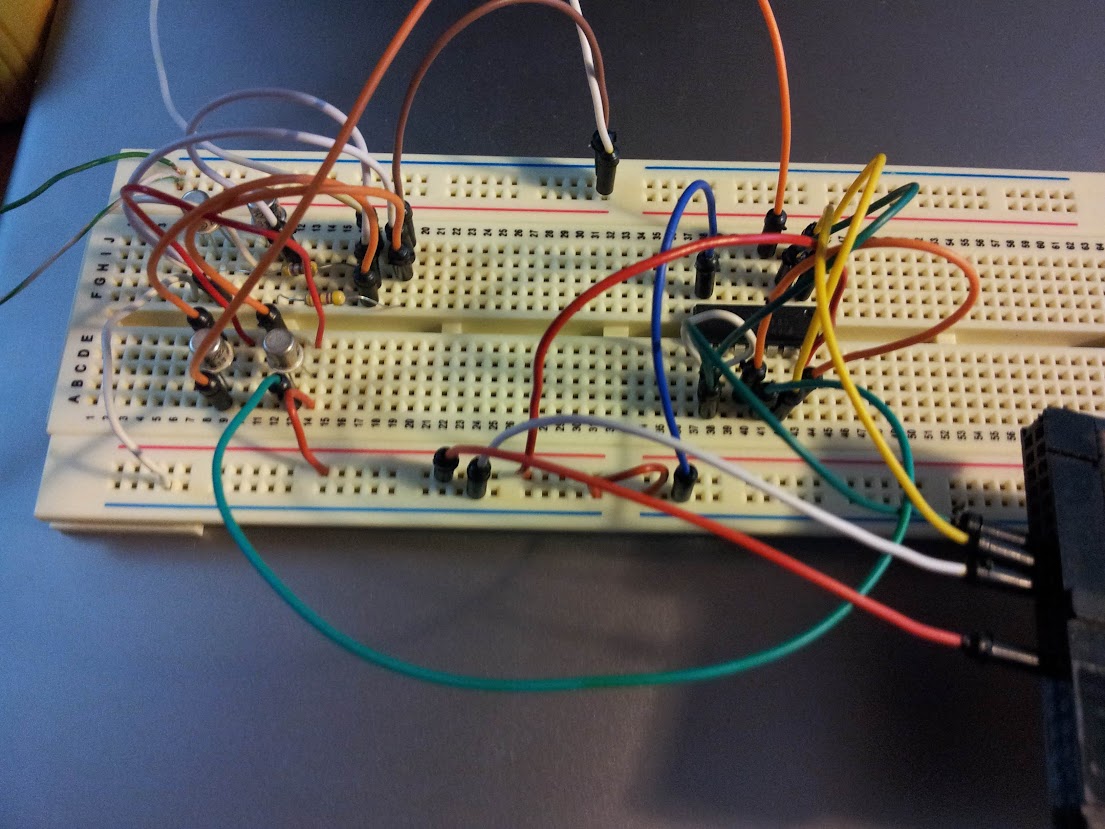

Eventually, I decided to use my Pi to build a motor-driven webcam that could “follow” a face detected by OpenCV in the webcam stream. I disassembled a lot of things before finding a suitable motor, but then I managed to connect it to the Raspberry Pi GPIO. I had to play a little with electronics here because I didn’t have a servo motor. If you need some information about this part, I’ll be happy to provide it, but I’m not very good with electronics, so the only thing I can say is that it works. Here’s a picture of the circuit:

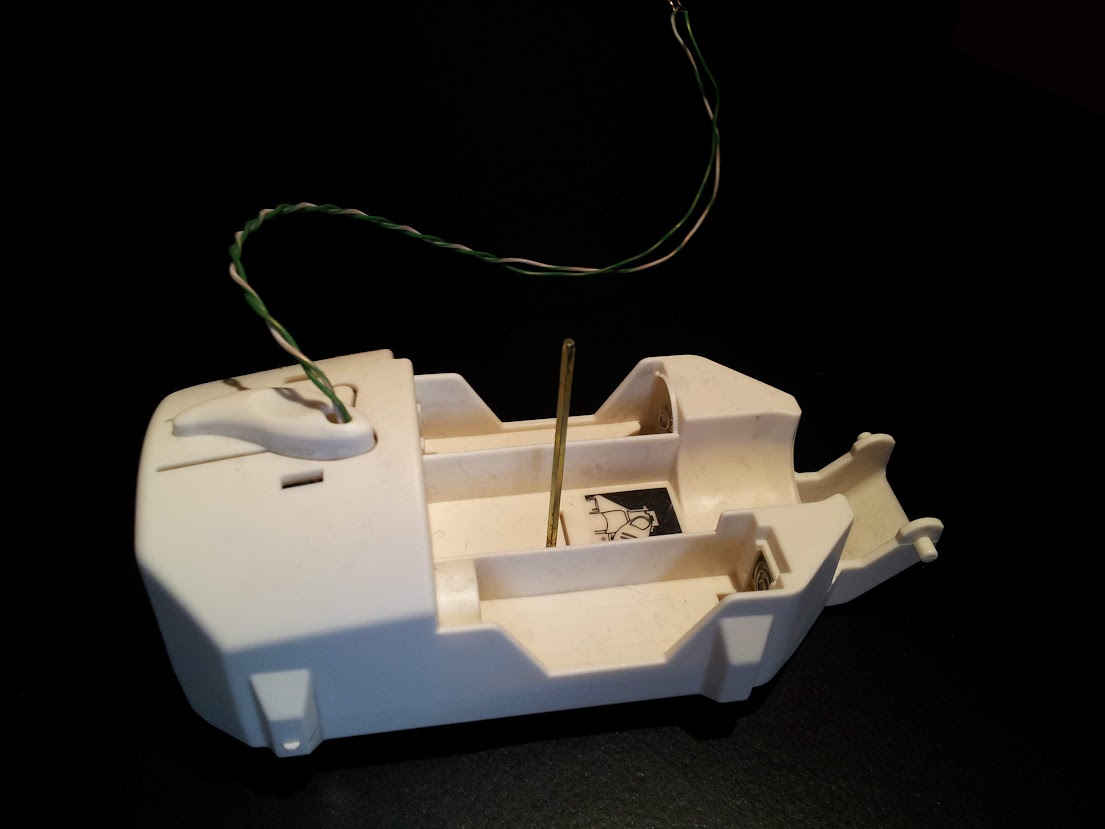

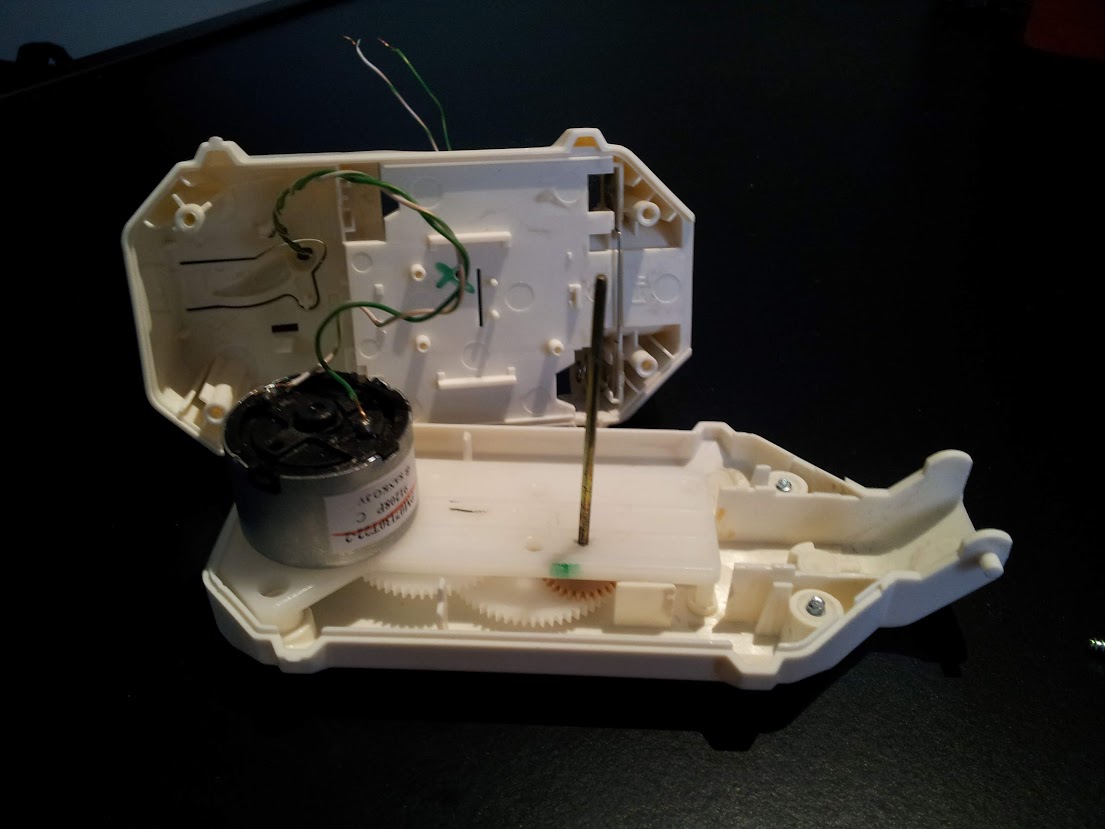

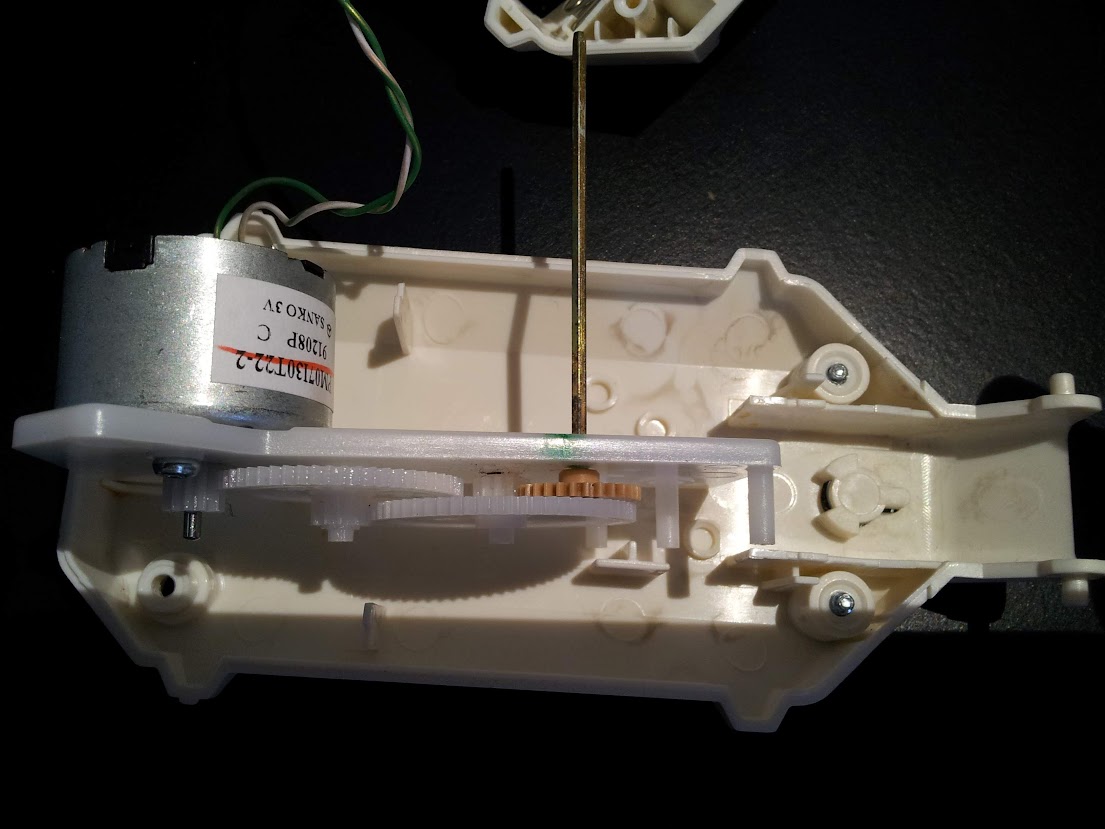

And here are some photos of the motor, to which I mounted an additional gear wheel.

Once the motor worked, I attached it to a webcam plugged into the Pi, then combined the script linked above with some GPIO scripting to achieve the goal. Here is the result:

import RPi.GPIO as GPIO
import time,sys
import cv,os
from datetime import datetime
import Image

#GPIO pins i used
OUT0 = 11
OUT1 = 13

out0 = False #!enable line: when it's false, the motor turns, when it's true, it stops
out1 = False #!the direction the motor turns (clockwise or counter clockwise, it depends on the circuit you made)

def DetectFace(image, faceCascade):
    # Returns the centre of the first detected face as (x, y) fractions of the
    # image width and height, or False when no face is found
    min_size = (20,20)
    image_scale = 2
    haar_scale = 1.1
    min_neighbors = 3
    haar_flags = 0

    # Allocate the temporary images
    grayscale = cv.CreateImage((image.width, image.height), 8, 1)
    smallImage = cv.CreateImage(
        (
            cv.Round(image.width / image_scale),
            cv.Round(image.height / image_scale)
        ), 8 ,1)

    # Convert color input image to grayscale
    cv.CvtColor(image, grayscale, cv.CV_BGR2GRAY)

    # Scale input image for faster processing
    cv.Resize(grayscale, smallImage, cv.CV_INTER_LINEAR)

    # Equalize the histogram
    cv.EqualizeHist(smallImage, smallImage)

    # Detect the faces
    faces = cv.HaarDetectObjects(
        smallImage, faceCascade, cv.CreateMemStorage(0),
        haar_scale, min_neighbors, haar_flags, min_size
    )

    # If faces are found
    if faces:
        #os.system("espeak -v it salve")
        for ((x, y, w, h), n) in faces:
            return (image_scale*(x+w/2.0)/image.width, image_scale*(y+h/2.0)/image.height)
            # the input to cv.HaarDetectObjects was resized, so scale the
            # bounding box of each face and convert it to two CvPoints
            # (these lines are never reached because of the return above)
            pt1 = (int(x * image_scale), int(y * image_scale))
            pt2 = (int((x + w) * image_scale), int((y + h) * image_scale))
            cv.Rectangle(image, pt1, pt2, cv.RGB(255, 0, 0), 5, 8, 0)
    return False

def now():
    return str(datetime.now())

def init():
    GPIO.setmode(GPIO.BOARD)
    GPIO.setup(OUT0, GPIO.OUT)
    GPIO.setup(OUT1, GPIO.OUT)
    stop()

def stop(): #stops the motor and return when it's stopped
    global out0
    if out0 == False: #sleep only if it was moving
        out0 = True
        GPIO.output(OUT0,out0)
        time.sleep(1)
    else:
        out0 = True
        GPIO.output(OUT0,out0)

def go(side, t): #turns the motor towards side, for t seconds
    print "Turning: side: " +str(side) + " time: " +str(t)
    global out0
    global out1
    out1 = side
    GPIO.output(OUT1,out1)
    out0 = False
    GPIO.output(OUT0,out0)
    time.sleep(t)
    stop()

#getting camera number arg
cam = 0
if len(sys.argv) == 2:
    cam = sys.argv[1]

#init motor
init()

capture = cv.CaptureFromCAM(int(cam))

#i had to take the resolution down to 480x320 because the pi gave me errors with the default (higher) resolution of the webcam
cv.SetCaptureProperty(capture, cv.CV_CAP_PROP_FRAME_WIDTH, 480)
cv.SetCaptureProperty(capture, cv.CV_CAP_PROP_FRAME_HEIGHT, 320)

#capture = cv.CaptureFromFile("test.avi")

#faceCascade = cv.Load("haarcascades/haarcascade_frontalface_default.xml")
#faceCascade = cv.Load("haarcascades/haarcascade_frontalface_alt2.xml")
faceCascade = cv.Load("/usr/share/opencv/haarcascades/haarcascade_frontalface_alt.xml")
#faceCascade = cv.Load("haarcascades/haarcascade_frontalface_alt_tree.xml")

while (cv.WaitKey(500)==-1):
    print now() + ": Capturing image.."
    for i in range(5):
        cv.QueryFrame(capture) #this is the workaround to avoid frame buffering
    img = cv.QueryFrame(capture)

    hasFaces = DetectFace(img, faceCascade)
    if hasFaces == False:
        print now() + ": Face not detected."
    else:
        print now() + ": " + str(hasFaces)
        #turn for up to 0.3 secs, proportionally to the distance of the face from the centre
        val = abs(0.5-hasFaces[0])/0.5 * 0.3
        #print "moving for " + str(val) + " secs"
        go(hasFaces[0] < 0.5, val)
    #cv.ShowImage("face detection test", img)

Of course I had to play with the timing to make the webcam turn well, and the timings, like everything strictly connected to the motor, depend on your specific motor and/or circuit.
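To make the timing logic a bit more explicit, here is a small sketch of how the script turns a detection rectangle into a motor command. The function names `face_center_x` and `turn_command` are invented for this example and do not appear in the script; the formulas and the 0.3 second maximum are the ones from the script above, and that maximum is exactly the kind of value you will need to tune for your own motor.

```python
def face_center_x(x, w, image_width, image_scale=2):
    """Horizontal centre of a face as a fraction of the full image width.

    x and w are measured on the downscaled image, so they are multiplied
    back by image_scale before dividing by the original width.
    """
    return image_scale * (x + w / 2.0) / image_width


def turn_command(face_x, max_seconds=0.3):
    """Map a face position (0.0 = left edge, 1.0 = right edge) to (side, seconds).

    side is the value written to the direction line; which way the motor
    actually turns depends on the circuit. seconds grows linearly with the
    distance from the centre of the image, up to max_seconds at the edges.
    """
    side = face_x < 0.5
    seconds = abs(0.5 - face_x) / 0.5 * max_seconds
    return side, seconds


# Example: a 480 pixel wide frame, face found at x=30, w=60 on the half-size image.
center = face_center_x(30, 60, 480)
side, seconds = turn_command(center)
```

With these numbers the face centre comes out at a quarter of the frame width, so the motor is told to turn towards `side = True` for about half of the maximum time. A face exactly in the middle gives a turn time of zero.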

Here’s the video (in Italian):